Since the launch of Android O, many developers and enthusiasts have wanted to know about its features, and so have I. Android O introduces a variety of new features and capabilities for users and developers. This document highlights what is new for developers.

You can check out the first developer preview here.

Let's discuss the features and APIs of Android O.

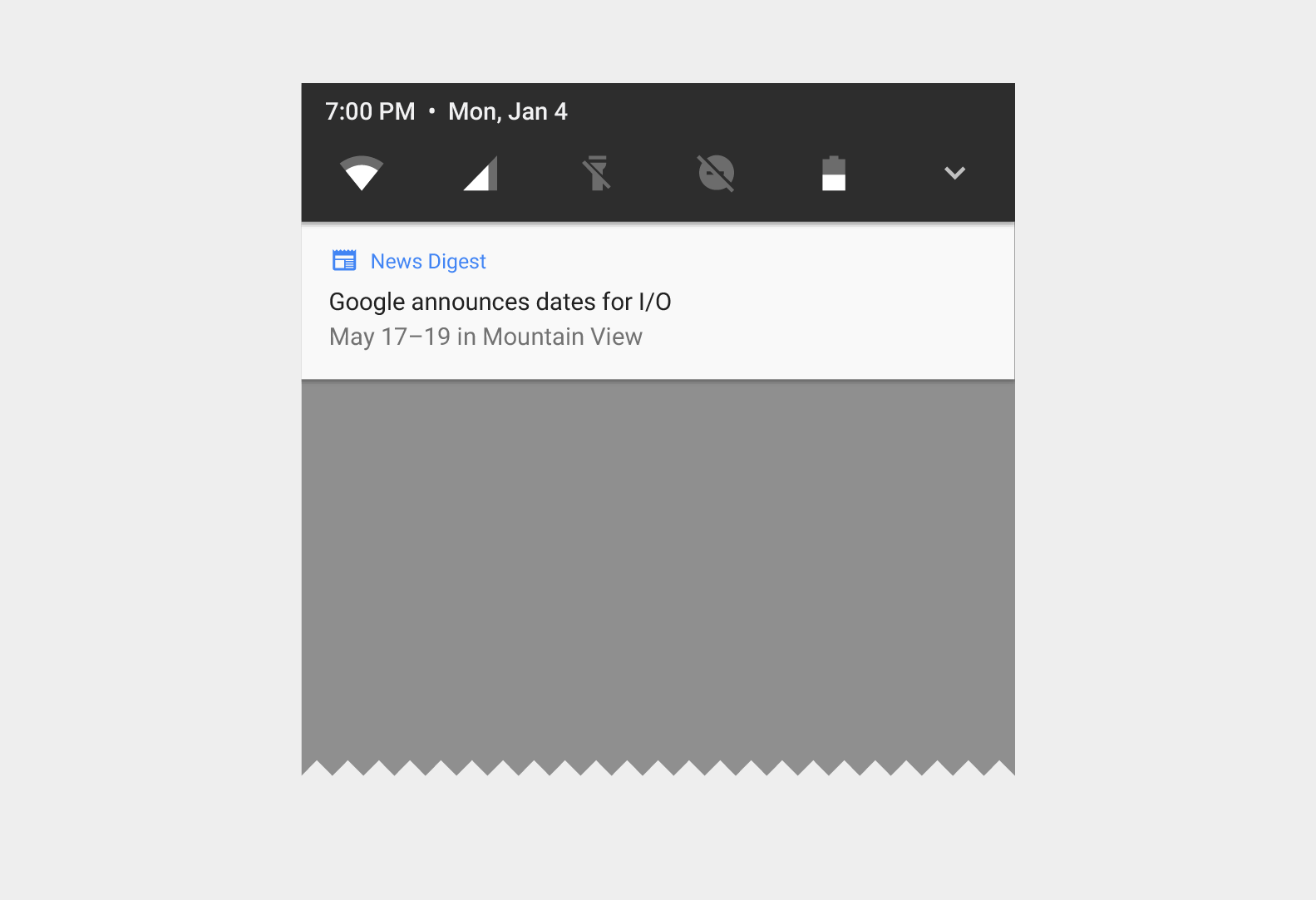

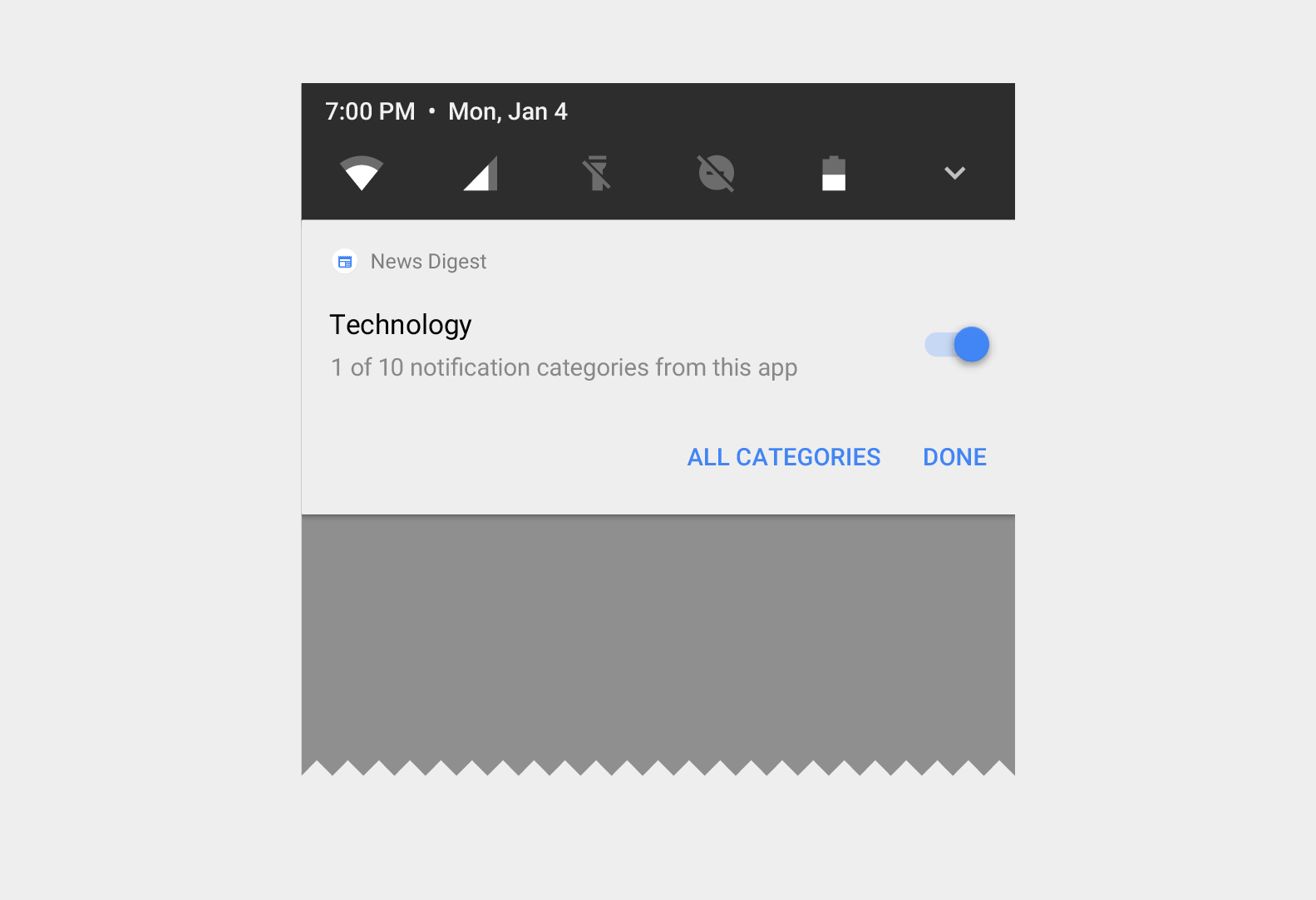

Notifications

Android O redesigns notifications to provide an easier and more consistent way to manage notification behavior and settings. These changes include:

- Notification channels: Android O introduces notification channels that allow you to create a user-customizable channel for each type of notification you want to display. The user interface refers to notification channels as notification categories. To learn how to implement notification channels, see the Notification Channels guide.

- Snoozing: Users can snooze notifications to reappear at a later time. Notifications reappear with the same level of importance they first appeared with. Apps can remove or update a snoozed notification, but updating a snoozed notification does not cause it to reappear.

- Notification timeouts: You can now set a timeout when creating a notification using Notification.Builder.setTimeout(). You can use this method to specify a duration after which a notification should be cancelled. If required, you can cancel a notification before the specified timeout duration elapses.

- Notification dismissal: The system now distinguishes whether a notification is dismissed by a user, or removed by an app. To check how notifications are dismissed, you should implement the new onNotificationRemoved() method of the NotificationListenerService class.

- Background colors: You can now set and enable a background color for a notification. Use this feature only in notifications for ongoing tasks that are critical for a user to see at a glance. For example, you could set a background color for notifications related to driving directions or a phone call in progress. Set the desired color using Notification.Builder.setColor(), then enable its use as the background with Notification.Builder.setColorized().

- Messaging style: Notifications that use the MessagingStyle class now display more content in their collapsed form. You should use the MessagingStyle class for messaging-related notifications. You can also use the new addHistoricMessage() method to give a conversation context by adding historic messages to messaging-related notifications.
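
The timeout rule in the list above can be made concrete with a minimal plain-Java sketch. This is a model, not Android code: `TimedNotification`, `isVisibleAt()`, and the millisecond clock values are hypothetical names chosen for illustration. On a device, the system does this bookkeeping for you once a timeout is set on the builder.

```java
/** Plain-Java model of a notification that carries a timeout (hypothetical, not an Android class). */
final class TimedNotification {
    private final long postedAtMs;  // time the notification was posted
    private final long timeoutMs;   // duration after which it should be cancelled
    private boolean cancelled;      // set when the app cancels it early

    TimedNotification(long postedAtMs, long timeoutMs) {
        if (timeoutMs <= 0) {
            throw new IllegalArgumentException("timeoutMs must be positive");
        }
        this.postedAtMs = postedAtMs;
        this.timeoutMs = timeoutMs;
    }

    /** The app may still cancel the notification before the timeout elapses. */
    void cancel() {
        cancelled = true;
    }

    /** Visible only while it has not been cancelled and the timeout has not elapsed. */
    boolean isVisibleAt(long nowMs) {
        return !cancelled && (nowMs - postedAtMs) < timeoutMs;
    }
}
```

For example, a notification posted at time 0 with a 5000 ms timeout is visible at 4999 ms and gone at 5000 ms. Calling `cancel()` at any earlier point removes it immediately.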

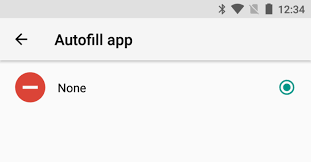

Autofill Framework

Users can save time filling out forms by using autofill on their devices. Android O makes filling out forms, such as account and credit card forms, easier with the introduction of the Autofill Framework. The Autofill Framework manages the communication between the app and an autofill service.

Benefits

Filling out forms is a time-consuming and error-prone task. Users can easily get frustrated with apps that require this type of task. The Autofill Framework improves the user experience by providing the following benefits:

- Less time spent filling in fields: Autofill saves users from retyping information.

- Fewer user input errors: Typing is prone to errors, especially on mobile devices. Removing the necessity of typing information also removes the errors that come with it.

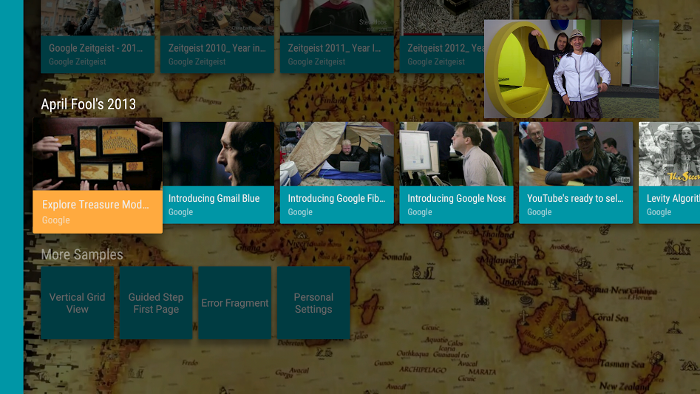

Picture-in-Picture mode

Android O allows activities to launch in picture-in-picture (PIP) mode. PIP is a special type of multi-window mode mostly used for video playback. PIP mode is already available for Android TV; Android O makes the feature available on other Android devices.

When an activity is in PIP mode, it is in the paused state, but should continue showing content. For this reason, you should make sure your app does not pause playback in its onPause() handler. Instead, you should pause video in onStop(), and resume playback in onStart(). For more information, see Multi-Window Lifecycle.
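
The lifecycle rule above can be sketched in plain Java. `PipAwarePlayerModel` is a hypothetical stand-in, not an Android class. Its methods only mirror the names of the activity callbacks so that the ordering is easy to follow.

```java
/** Plain-Java stand-in for an activity that hosts video playback (hypothetical model). */
final class PipAwarePlayerModel {
    private boolean playing;

    /** Mirrors onStart(): the activity is becoming visible, so playback resumes. */
    void onStart() {
        playing = true;
    }

    /**
     * Mirrors onPause(): in PIP mode the activity is paused but still on screen,
     * so playback is deliberately left untouched here.
     */
    void onPause() {
        // Intentionally empty: do not pause the video in this callback.
    }

    /** Mirrors onStop(): the activity is no longer visible, so playback pauses. */
    void onStop() {
        playing = false;
    }

    boolean isPlaying() {
        return playing;
    }
}
```

In a real activity, the same logic would live in overrides of onStart(), onPause(), and onStop(), with the calls to the actual video player in place of the boolean flag.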

Benefits

Your app can decide when to trigger PIP mode. Here are some examples of when to enter PIP mode:

- Your app can move a video into PIP mode when the user navigates back from the video to browse other content.

- Your app can switch a video into PIP mode while a user watches the end of an episode of content. The main screen displays promotional or summary information about the next episode in the series.

- Your app can provide a way for users to queue up additional content while they watch a video. The video continues playing in PIP mode while the main screen displays a content selection activity.

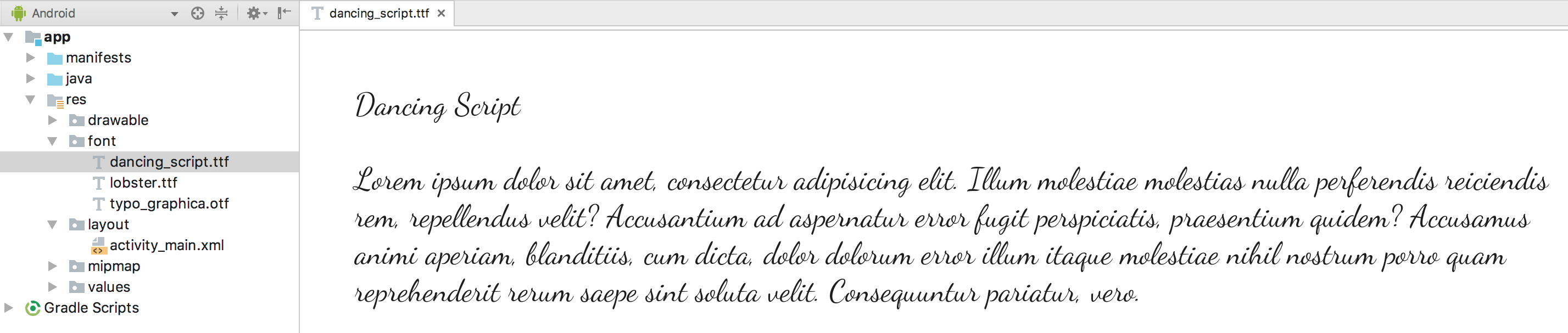

Working with fonts

Android O introduces a new feature, Fonts in XML, which lets you use fonts as resources. This means there is no need to bundle fonts as assets. Fonts are compiled into the R file and are automatically available in the system as a resource. You can then access these fonts with the help of a new resource type, font.

Android O also provides a mechanism to retrieve information related to system fonts and provide file descriptors. For more information about using fonts as resources and retrieving system fonts, see Working with fonts.

Adaptive icons

Android O introduces adaptive launcher icons. Adaptive icons support visual effects and can display a variety of shapes across different device models. For example, a launcher icon can display as a circle on one OEM device and as a squircle on another. Each device OEM provides a mask, which the system then uses to render all icons with the same shape.

The new launcher icons are also used in shortcuts, the Settings app, sharing dialogs, and the overview screen.

Color management

Developers of imaging apps can now take advantage of new devices that have a wide-gamut, color-capable display. To display wide-gamut images, apps need to enable a flag in their manifest (per activity) and load bitmaps with an embedded wide color profile (AdobeRGB, Pro Photo RGB, DCI-P3, etc.).

Wi-Fi Aware

Android O adds support for Wi-Fi Aware, which is based on the Neighbor Awareness Networking (NAN) specification. On devices with the appropriate Wi-Fi Aware hardware, apps and nearby devices can discover and communicate over Wi-Fi without an Internet access point. Google is working with its hardware partners to bring Wi-Fi Aware technology to devices as soon as possible.

WebView APIs

Android O provides several APIs to help you manage the WebView objects that display web content in your app. These APIs, which improve your app’s stability and security, include the following:

- Version API

- Google SafeBrowsing API

- Termination Handle API

- Renderer Importance API

Accessibility

Android O supports the following accessibility features for developers who create their own accessibility services:

Language detection

To determine which languages the Text-to-Speech (TTS) tool has identified within a range of text, use detectLanguages(). This method appears in the TextClassificationManager class, which has been introduced in Android O. You can use the resulting list of TextLanguage objects to see which areas of text have been assigned to a particular language, as well as how confidently TTS has assigned a language to a particular subset of text.

Accessibility button

Your service can request that an accessibility button appear within the system’s navigation area by setting the FLAG_REQUEST_ACCESSIBILITY_BUTTON flag within the android:accessibilityFlags attribute. This button offers users a quick way to activate your service’s functionality from any screen on the device. Your service can register button interaction callbacks using registerAccessibilityButtonCallback().

Note: This feature is available only on devices that provide a software-rendered navigation area. Use the isAccessibilityButtonAvailable() method and the onAvailabilityChanged() callback to keep track of the accessibility button’s availability. Ensure that users of your service have alternate ways to access related functionality in cases where the accessibility button is unavailable.

Fingerprint gestures

Your accessibility service can also respond to an alternative input mechanism, directional swipes (up, down, left, and right) along a device’s fingerprint sensor. To receive callbacks about these interactions, complete the following sequence of steps:

- Declare the USE_FINGERPRINT permission and the CAPABILITY_CAN_CAPTURE_FINGERPRINT_GESTURES capability.

- Set the FLAG_CAPTURE_FINGERPRINT_GESTURES flag within the android:accessibilityFlags attribute.

- Register for callbacks using registerFingerprintGestureCallback().

Keep in mind that not all devices include fingerprint sensors. You can use the isHardwareDetected() method to identify whether a device supports the sensor. Even on devices that include a fingerprint sensor, your service can use the sensor only when it’s not in use for authentication purposes. To identify when the sensor is available, call the isGestureDetectionAvailable() method and implement the onGestureDetectionAvailabilityChanged() callback.

Word-level highlighting

To determine the locations of visible characters in a TextView object, you can pass in EXTRA_DATA_TEXT_CHARACTER_LOCATION_KEY as the first argument into refreshWithExtraData(). A Bundle object, which you provide as the second argument to refreshWithExtraData(), is then updated to include a parcelable array of Rect objects. Each Rect object represents the bounding box of a particular character.

If your service uses a TextToSpeech object to dictate the content that appears on-screen, you can obtain more precise timing information about when text-to-speech engines begin speaking individual synthesized words. When an engine expects to begin playing audio for a specific range of text, the text-to-speech API notifies your service that speech for the range of text is beginning using the onUtteranceRangeStart() callback.

If you create your own implementation of TextToSpeechService, you can support this new functionality by using the rangeStart() method.
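
As a rough illustration of what a service might do with the range reported by the callback described above, here is a minimal plain-Java sketch. `SpokenRangeHighlighter` and `highlight()` are hypothetical helpers, and the sketch assumes the callback supplies a start offset and an exclusive end offset into the utterance text. A real service would update its on-screen highlight instead of building a string.

```java
/** Hypothetical helper that marks the range of text currently being spoken. */
final class SpokenRangeHighlighter {
    private SpokenRangeHighlighter() {
        // Static utility; not meant to be instantiated.
    }

    /**
     * Returns the text with square brackets around the characters in [start, end).
     * The offsets are assumed to come from the speech range callback.
     */
    static String highlight(String text, int start, int end) {
        if (start < 0 || end > text.length() || start > end) {
            throw new IllegalArgumentException("range out of bounds: " + start + ".." + end);
        }
        return text.substring(0, start)
                + "[" + text.substring(start, end) + "]"
                + text.substring(end);
    }
}
```

For instance, `SpokenRangeHighlighter.highlight("Hello world", 6, 11)` returns `Hello [world]`, which corresponds to the moment the engine begins speaking the second word.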

Hint text

Your service can access the hint text of an EditText object using the getHintText() method within the AccessibilityNodeInfo class. Even if a particular EditText object isn’t currently displaying hint text, the getHintText() method still provides hint text for your service.

Continued gesture dispatch

Your service can now specify sequences of strokes that belong to the same programmatic gesture by using the final argument, isContinued, in the GestureDescription.StrokeDescription constructor.
