Machine learning is the idea that there are generic algorithms that can tell you something interesting about a set of data without you having to write any custom code specific to the problem. Instead of writing code, you feed data to the generic algorithm and it builds its own logic based on the data.
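
To make that concrete, here is a minimal Python sketch of one such generic algorithm, a k-nearest-neighbors classifier. The function name `knn_predict` and both toy data sets are made up for illustration. The point is only that the same code handles two unrelated problems without any problem-specific logic.

```python
import math
from collections import Counter


def knn_predict(training_data, query, k=3):
    """Classify `query` by majority vote among its k nearest training points.

    `training_data` is a list of (features, label) pairs.
    """
    by_distance = sorted(training_data, key=lambda pair: math.dist(pair[0], query))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


# Hypothetical data set 1: (size in square meters, number of rooms) -> price class
houses = [
    ((50, 1), "cheap"), ((60, 1), "cheap"), ((65, 2), "cheap"),
    ((200, 4), "expensive"), ((220, 5), "expensive"), ((250, 5), "expensive"),
]

# Hypothetical data set 2: (weight in grams, diameter in cm) -> fruit
fruit = [
    ((150, 7.0), "apple"), ((170, 7.5), "apple"), ((160, 7.2), "apple"),
    ((5, 1.5), "grape"), ((6, 1.8), "grape"), ((4, 1.4), "grape"),
]

print(knn_predict(houses, (210, 4)))   # expensive
print(knn_predict(fruit, (155, 7.1)))  # apple
```

Nothing in `knn_predict` knows about houses or fruit. All of the "logic" comes from the examples you feed it.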

Today we’re going to look at the top 10 open source machine learning projects that can be useful for programmers.

Hope you find an interesting Machine Learning Open Source project that inspires you.

Detectron

FAIR’s research platform for object detection research, implementing popular algorithms like Mask R-CNN and RetinaNet.

It is Facebook AI Research’s software system that implements state-of-the-art object detection algorithms, including Mask R-CNN. It is written in Python and powered by the Caffe2 deep learning framework.

FaceSwap

A tool that utilizes deep learning to recognize and swap faces in pictures and videos.

The project has multiple entry points. You will have to:

- Gather photos (or use the ones provided with the project's training data)

- Extract faces from your raw photos

- Train a model on your photos (or use the one provided with the project's training data)

- Convert your sources with the model

Gradient-checkpointing

Make huge neural nets fit in memory.

Training very deep neural networks requires a lot of memory. Using the tools in this package, developed jointly by Tim Salimans and Yaroslav Bulatov, you can trade some of that memory usage for extra computation so that your model fits into memory more easily. For feed-forward models, the authors report fitting more than 10x larger models onto their GPU, at only a 20% increase in computation time.
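
The memory-for-computation trade is easier to see in a toy example. The sketch below is plain Python, not the package's own API: it assumes a hypothetical chain of scalar `tanh` layers, and all helper names are invented here. It compares ordinary backpropagation, which stores every layer input, with a checkpointed version that stores only every `segment`-th input and recomputes the rest during the backward pass.

```python
import math


def layer(w, x):
    """Toy layer: a scalar tanh unit."""
    return math.tanh(w * x)


def layer_grad(w, x):
    """Derivative of layer(w, x) with respect to its input x."""
    return w * (1.0 - math.tanh(w * x) ** 2)


def grad_store_all(weights, x0):
    """Standard backprop: keep the input of every layer in memory."""
    inputs = []
    x = x0
    for w in weights:
        inputs.append(x)
        x = layer(w, x)
    grad = 1.0
    for w, x_in in zip(reversed(weights), reversed(inputs)):
        grad *= layer_grad(w, x_in)
    return grad, len(inputs)


def grad_checkpointed(weights, x0, segment):
    """Checkpointed backprop: keep only the input of every `segment`-th layer."""
    checkpoints = {}
    x = x0
    for i, w in enumerate(weights):
        if i % segment == 0:
            checkpoints[i] = x
        x = layer(w, x)

    grad = 1.0
    peak_stored = 0
    # Walk the segments from last to first, recomputing each one's inputs.
    for start in sorted(checkpoints, reverse=True):
        seg_weights = weights[start:start + segment]
        x = checkpoints[start]
        seg_inputs = []
        for w in seg_weights:
            seg_inputs.append(x)
            x = layer(w, x)
        peak_stored = max(peak_stored, len(checkpoints) + len(seg_inputs))
        for w, x_in in zip(reversed(seg_weights), reversed(seg_inputs)):
            grad *= layer_grad(w, x_in)
    return grad, peak_stored


weights = [0.9 + 0.002 * i for i in range(100)]
grad_full, stored_full = grad_store_all(weights, 0.5)
grad_ckpt, stored_ckpt = grad_checkpointed(weights, 0.5, segment=10)
print(stored_full, stored_ckpt)  # 100 20
```

Both versions return the same gradient, but the checkpointed one holds at most 20 values at a time instead of 100, at the cost of running each layer's forward computation a second time.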

Lime

Explaining the predictions of any machine learning classifier.

This project is about explaining what machine learning classifiers (or models) are doing. At the moment, it supports explaining individual predictions for text classifiers, for classifiers that act on tables (numpy arrays of numerical or categorical data), and for image classifiers, through a package called lime (short for local interpretable model-agnostic explanations). Lime is based on the work presented in the project's accompanying paper.
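
The core idea behind that name can be sketched without the library. The code below is not the lime package's API. It is a minimal illustration, assuming a numeric black-box function and hypothetical helper names (`solve`, `explain_locally`). It perturbs one instance, weights the perturbed samples by how close they stay to the original, and fits a weighted linear model whose coefficients serve as the local explanation.

```python
import math
import random


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def explain_locally(black_box, instance, n_samples=500, scale=0.5,
                    kernel_width=1.0, seed=0):
    """Return one local linear weight per feature around `instance`."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Perturb the instance with Gaussian noise.
        sample = [v + rng.gauss(0, scale) for v in instance]
        dist2 = sum((s - v) ** 2 for s, v in zip(sample, instance))
        rows.append([1.0] + sample)  # leading 1.0 is the intercept column
        targets.append(black_box(sample))
        # Samples closer to the instance count more.
        weights.append(math.exp(-dist2 / kernel_width ** 2))

    # Weighted least squares via the normal equations: (X^T W X) beta = X^T W y
    k = len(instance) + 1
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(k)]
            for i in range(k)]
    xtwy = [sum(w * r[i] * y for r, w, y in zip(rows, weights, targets))
            for i in range(k)]
    beta = solve(xtwx, xtwy)
    return beta[1:]  # drop the intercept


def black_box(x):
    # Hypothetical model: nonlinear in x[0], linear in x[1]
    return x[0] ** 2 + 3.0 * x[1]


local_weights = explain_locally(black_box, [2.0, 0.0])
print(local_weights)
```

Near the point (2, 0) the slope of `x[0] ** 2` is about 4, so the first weight should come out roughly at 4 and the second roughly at 3, even though no single global linear model describes this function.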

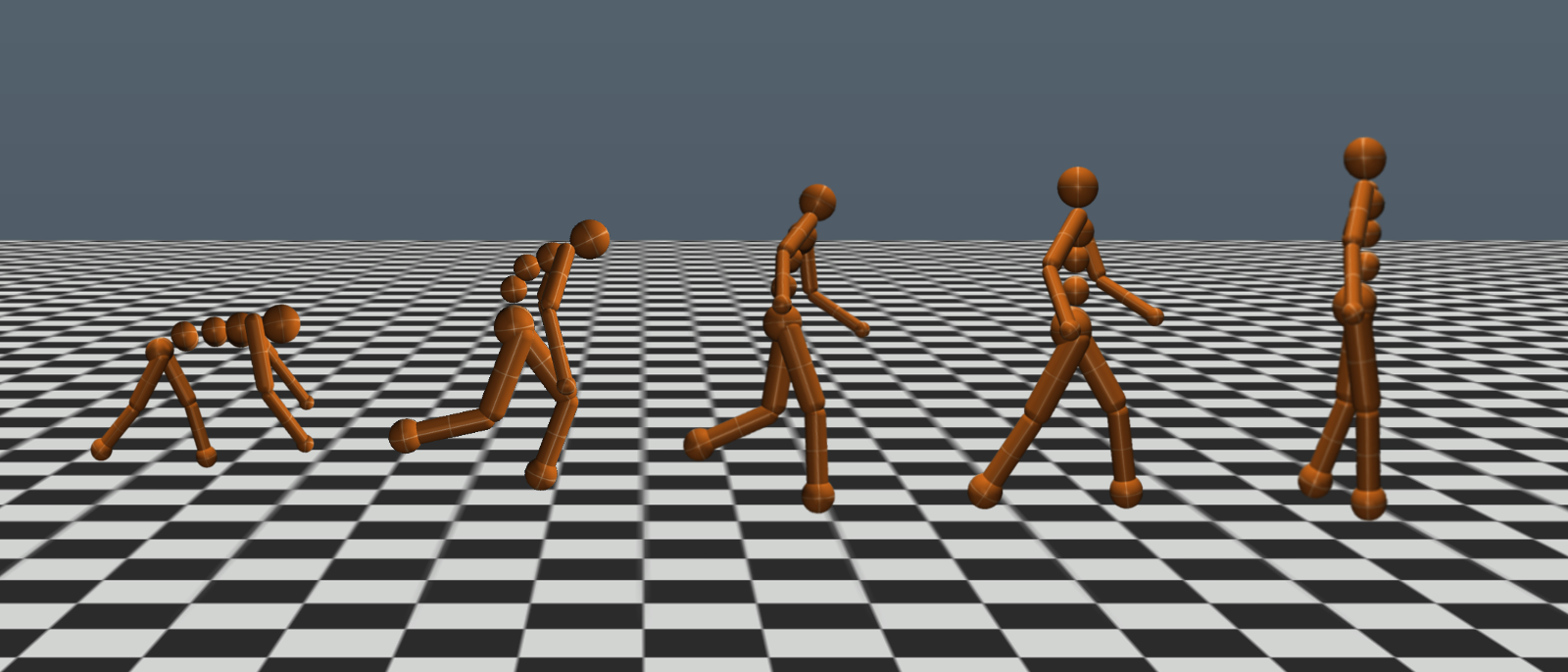

Dm_control

The DeepMind Control Suite and Control Package.

This package contains:

- A set of Python Reinforcement Learning environments powered by the MuJoCo physics engine. See the suite subdirectory.

- Libraries that provide Python bindings to the MuJoCo physics engine.

Psychlab

Experimental paradigms implemented using the Psychlab platform (3D platform for agent-based AI).

Psychlab recreates the set-up typically used in human psychology experiments inside the virtual DeepMind Lab environment. This usually consists of a participant sitting in front of a computer monitor using a mouse to respond to the onscreen task. Similarly, our environment allows a virtual subject to perform tasks on a virtual computer monitor, using the direction of its gaze to respond. This allows humans and artificial agents to both take the same tests, minimising experimental differences. It also makes it easier to connect with the existing literature in cognitive psychology and draw insights from it.

Deepj

A deep learning model for style-specific music generation.

Recent advances in deep neural networks have enabled algorithms to compose music that is comparable to music composed by humans. However, few algorithms allow the user to generate music with tunable parameters. The ability to tune properties of generated music will yield more practical benefits for aiding artists, filmmakers, and composers in their creative tasks.

Simple

Experimental Global Optimization Algorithm

Simple is a radically more scalable alternative to Bayesian Optimization. Like Bayesian Optimization, it is highly sample-efficient, converging to the global optimum in as few samples as possible. Unlike Bayesian Optimization, it has a runtime performance of O(log(n)) instead of O(n^3) (or O(n^2) with approximations), as well as a constant factor that is roughly three orders of magnitude smaller.

Simple’s runtime performance, combined with its superior sample efficiency in high dimensions, allows the algorithm to easily scale to problems featuring large numbers of design variables.

Deep-neuroevolution

Uber AI Labs neuroevolution algorithms.

In the field of deep learning, deep neural networks (DNNs) with many layers and millions of connections are now trained routinely through stochastic gradient descent (SGD). Many assume that the ability of SGD to efficiently compute gradients is essential to this capability. The algorithms in this project question that assumption by training networks with evolutionary methods instead of gradients.
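
For a feel of what training without gradients looks like, here is a minimal sketch of a simple genetic algorithm with truncation selection, Gaussian mutation, and elitism. It is not code from the repository: the tiny 2-2-1 network, the XOR task, and all names and hyperparameters below are assumptions chosen to keep the example short.

```python
import math
import random

# Toy task: learn XOR.
XOR = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]


def predict(w, x):
    """Tiny 2-2-1 network with tanh hidden units; `w` holds its 9 weights."""
    h1 = math.tanh(w[0] * x[0] + w[1] * x[1] + w[2])
    h2 = math.tanh(w[3] * x[0] + w[4] * x[1] + w[5])
    return w[6] * h1 + w[7] * h2 + w[8]


def fitness(w):
    """Negative squared error on XOR, so higher is better."""
    return -sum((predict(w, x) - y) ** 2 for x, y in XOR)


def evolve(generations=200, pop_size=50, n_parents=10, sigma=0.1, seed=0):
    rng = random.Random(seed)
    population = [[rng.gauss(0, 1) for _ in range(9)] for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        # Rank the population by fitness; no gradients are involved.
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        # Truncation selection: only the top individuals become parents.
        parents = population[:n_parents]
        # Elitism: the current best survives unchanged.
        children = [population[0]]
        while len(children) < pop_size:
            parent = rng.choice(parents)
            # Mutation: add Gaussian noise to every weight.
            children.append([g + rng.gauss(0, sigma) for g in parent])
        population = children
    return population[0], best_per_generation


best, history = evolve()
print(history[0], history[-1])
```

Because the best individual is always carried over unchanged, the best fitness can never get worse from one generation to the next, and no gradient is ever computed.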

NPMT

Towards Neural Phrase-based Machine Translation

Neural Phrase-based Machine Translation (NPMT) explicitly models the phrase structures in output sequences using Sleep-WAke Networks (SWAN), a recently proposed segmentation-based sequence modeling method. To mitigate the monotonic alignment requirement of SWAN, we introduce a new layer to perform (soft) local reordering of input sequences. Different from existing neural machine translation (NMT) approaches, NPMT does not use attention-based decoding mechanisms. Instead, it directly outputs phrases in a sequential order and can decode in linear time.

That’s it for this roundup of machine learning open source projects. If you liked this article, please consider sharing it. Have fun!
